Only 8% of Legal Teams Care About Quality. Really?

An LDO survey result implies that tracking the quality of legal outcomes is less important than tracking legal spending, but this can't be true. What's going on?

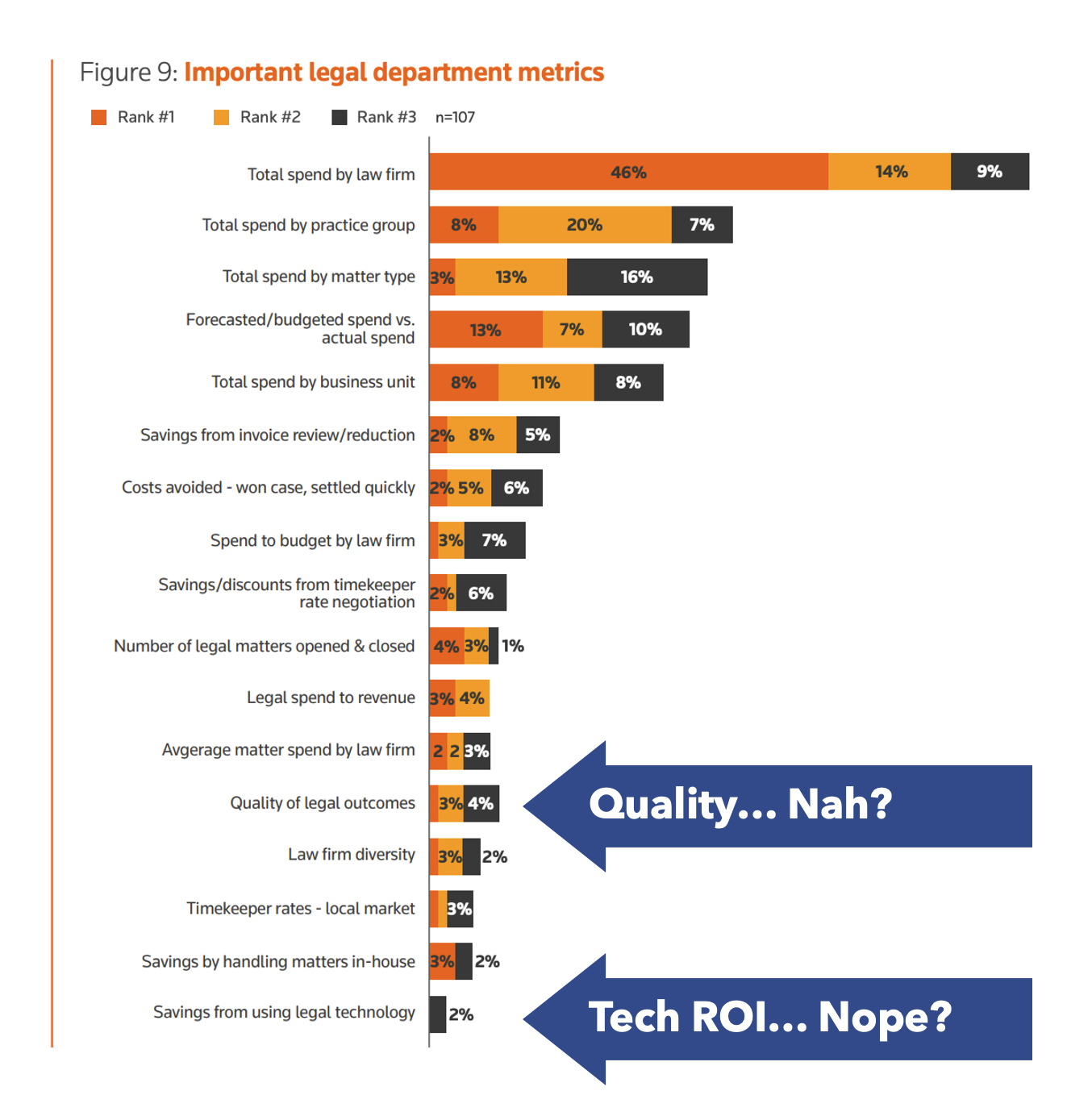

Tracking quality is not a priority. That is one possible conclusion to draw from the latest Legal Department Operations Index, published by Thomson Reuters. When asked to rank the metrics most routinely reported on by legal, a mere 8% of respondents included quality of legal outcomes in their top 3. By contrast, around 70% are tracking total spend by law firm, while other spending measures make up most of the top-ranking metrics. So, what's up with this data? Is legal really indifferent to quality?

Intuitively, this seems wrong. Imagine going to the GC and saying "We're tracking what our outside firms are churning out, and who's the cheapest. We've got no idea if we can trust their work, but we don't care about quality, do we?" No GC is going to say "Sure, sounds great." No GC will choose the cheap option if it means high risk and problems down the road. Of course quality matters.

One explanation for this data is the audience. If the respondents to this survey were disproportionately spend management and pricing people, they would naturally report spend-related metrics before most other things, since that's what they personally deal with.

Another explanation is that quality of legal outcomes is hard to measure, and therefore it is tracked far less frequently than things that are easy to measure, like spending. If this is true, it represents a huge opportunity for legal teams and legal ops. As data about legal work product becomes more accessible, there are new opportunities to measure quality and therefore to track quality metrics. With tools like Catylex enabling the extraction and analysis of data from contracts, for example, it's possible to track and score deals according to how well they conform to, or deviate from, a corporate playbook. These scores can then be used as an input to quality measurement. Firms that consistently produce above-average scores for contract negotiations can now be recognized and potentially rewarded.
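To make the playbook idea concrete, here is a minimal sketch in Python. Everything in it is a hypothetical illustration: the playbook clauses, the firm names, and the scoring rule (the share of playbook clauses where the negotiated term matches the preferred position) are assumptions, not a description of how Catylex or any other tool scores deals.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical playbook: clause name -> preferred position (illustrative values only).
PLAYBOOK = {
    "governing_law": "New York",
    "liability_cap": "12 months fees",
    "payment_terms": "net 30",
}


def conformance_score(deal_terms, playbook=PLAYBOOK):
    """Return the fraction of playbook clauses where the deal matches the preferred position."""
    matches = sum(
        1 for clause, preferred in playbook.items()
        if deal_terms.get(clause) == preferred
    )
    return matches / len(playbook)


def average_score_by_firm(deals):
    """Average the conformance scores of each firm's deals."""
    scores = defaultdict(list)
    for deal in deals:
        scores[deal["firm"]].append(conformance_score(deal["terms"]))
    return {firm: mean(values) for firm, values in scores.items()}


# Made-up extracted terms for three negotiated deals.
deals = [
    {"firm": "Firm A", "terms": {"governing_law": "New York",
                                 "liability_cap": "12 months fees",
                                 "payment_terms": "net 30"}},
    {"firm": "Firm A", "terms": {"governing_law": "New York",
                                 "liability_cap": "24 months fees",
                                 "payment_terms": "net 30"}},
    {"firm": "Firm B", "terms": {"governing_law": "Delaware",
                                 "liability_cap": "24 months fees",
                                 "payment_terms": "net 30"}},
]

firm_scores = average_score_by_firm(deals)
for firm, score in sorted(firm_scores.items()):
    print(f"{firm}: {score:.0%} playbook conformance")
```

A real scoring scheme would probably weight clauses by risk and allow for acceptable fallback positions rather than exact matches, but even a simple score like this gives you something to compare across firms over time.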

Similarly, large-scale document review projects are very well-suited to quality measurement. If you need to extract data across tens or hundreds of thousands of documents, it would be wise to compare the cost, speed and quality of different methods, mixing and matching law firms, ALSPs and software providers. Going with the lowest price per document without testing quality could expose you to bad data and substantial risk. A measured evaluation of accuracy and error rates, using different pools of documents, can provide useful metrics about how best to distribute the work. If you discover that software works best for 70% of the data, ALSPs for 20%, and law firms for 10%, then you can design a review project accordingly, and achieve maximum bang for buck.
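As a rough sketch of that kind of evaluation, the Python below takes sampled review results for each document pool and picks the cheapest method that clears an accuracy bar. The pool names, sample results, per-document costs and the 90% threshold are all invented for illustration, and the selection rule is just one assumption about how you might trade cost against quality.

```python
def accuracy(results):
    """Share of sampled documents where the extracted data matched a verified answer."""
    return sum(results) / len(results)


def best_method(pool_results, cost_per_doc, min_accuracy=0.9):
    """Pick the cheapest method meeting the accuracy bar, else the most accurate one."""
    qualifying = [m for m, r in pool_results.items() if accuracy(r) >= min_accuracy]
    if qualifying:
        return min(qualifying, key=lambda m: cost_per_doc[m])
    return max(pool_results, key=lambda m: accuracy(pool_results[m]))


# Hypothetical cost per document for each method.
cost_per_doc = {"software": 0.50, "ALSP": 3.00, "law firm": 12.00}

# Hypothetical samples of 20 documents per pool; True means a correct extraction.
samples = {
    "standard NDAs": {
        "software": [True] * 19 + [False] * 1,
        "ALSP": [True] * 19 + [False] * 1,
        "law firm": [True] * 20,
    },
    "amended leases": {
        "software": [True] * 14 + [False] * 6,
        "ALSP": [True] * 19 + [False] * 1,
        "law firm": [True] * 20,
    },
    "bespoke agreements": {
        "software": [True] * 12 + [False] * 8,
        "ALSP": [True] * 16 + [False] * 4,
        "law firm": [True] * 19 + [False] * 1,
    },
}

plan = {pool: best_method(results, cost_per_doc) for pool, results in samples.items()}
for pool, method in plan.items():
    error_rate = 1 - accuracy(samples[pool][method])
    print(f"{pool}: use {method} (sample error rate {error_rate:.0%})")
```

With these made-up numbers, software handles the standard NDAs, the ALSP takes the amended leases, and the law firm keeps the bespoke agreements. In practice you would want larger samples and a threshold that reflects how costly an error is for each type of document.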

One other metric that jumps out of this survey is "savings from using legal technology." A teeny-tiny 2% of legal teams have this as a priority metric. Given the hype around legal tech in recent years, it's surprising so few teams are tracking its impact. Doing more to track metrics around legal tech ROI is important for legal departments and legal tech vendors alike. If your metrics are bad, this will come out eventually. But if your metrics are good, then tracking and reporting the benefits will help to accelerate adoption of the technologies that truly deliver.