AI Autopilots Are Amazing, But Only If They’re Trustworthy

Why AI autonomy is the metric that matters

Despite all the hype, successful AI projects seem to be thin on the ground. Recent reports suggest that most businesses (other than software companies) see little to no impact on productivity from AI. AI makes many tedious tasks easier and makes reports look slicker, but these benefits are undermined by a growing body of ‘work slop’ that wastes the time of its unfortunate recipients. AI is producing good stuff and bad stuff and, at first glance, it’s not easy to tell the difference.

Searching for Successful AI

So how do we find AI applications that deliver real business value? How do we prioritize AI projects that boost productivity, rather than undermining it? One answer is to pay more attention to human intervention rates, and whether they are lowered in meaningful ways. Put another way, how much busy work can AI perform autonomously, without a giant human checking layer, and without a material increase in risk?

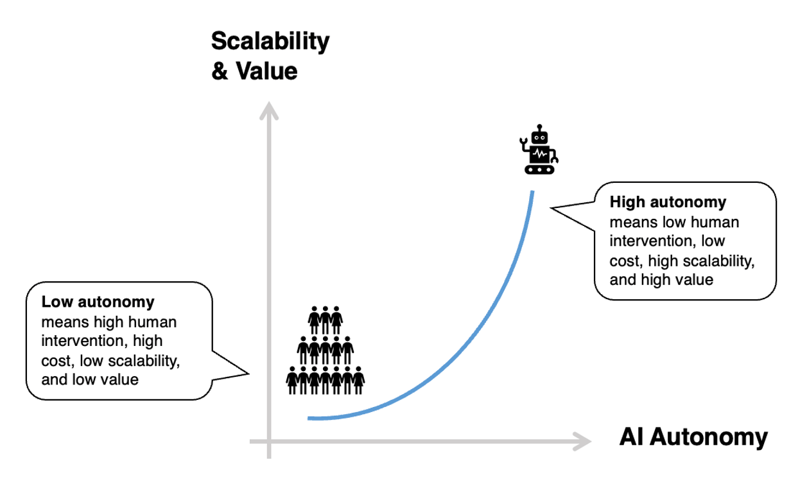

AI Autonomy and Why It Matters

Broadly speaking, AI can be deployed with varying degrees of autonomy:

Low autonomy, human-in-the-loop (HITL)

These are AI solutions where a human is always involved, and the AI assists them (aka “AI copilot”). Given the tendency for generative AI to hallucinate and make things up, HITL solutions are extremely common, with humans and AI working together to check each other’s work.

Medium autonomy, human-on-the-loop (HOTL)

These are AI solutions where some part of a workflow or workload is performed autonomously by AI, but human experts get looped in to resolve problems or risks based on triggers or exceptions. Effective HOTL solutions depend upon reliable intervention triggers (there must be some indication that the AI needs help), and their value is highest when the human intervention rate is low.

High autonomy, human-out-of-the-loop (HOOTL)

These are AI solutions where work is performed by AI with no human intervention (aka “AI autopilot”). HOOTL solutions require extensive testing appropriate to their risk profile and will likely be more narrowly focused for this reason. But when HOOTL AI performs well at scale, the benefits can be spectacular.
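To make the HOTL idea concrete, here is a minimal Python sketch of an intervention trigger. Everything in it is a hypothetical illustration rather than any vendor's method: the `AIOutput` record, the `confidence` field, the `flags` list, and the 0.9 threshold are all assumptions. It also assumes you already have a trust signal you can rely on, which is the hard part in practice.

```python
from dataclasses import dataclass, field


@dataclass
class AIOutput:
    """One piece of AI-produced work (hypothetical record for illustration)."""
    item_id: str
    confidence: float  # assumed trust signal between 0.0 and 1.0
    flags: list = field(default_factory=list)  # assumed exception markers


def needs_human(output, min_confidence=0.9):
    """Trigger: escalate when confidence is low or any exception was flagged."""
    return output.confidence < min_confidence or bool(output.flags)


def route(outputs, min_confidence=0.9):
    """Split work into an autonomous pile and a human-review pile."""
    autonomous, review = [], []
    for output in outputs:
        if needs_human(output, min_confidence):
            review.append(output)
        else:
            autonomous.append(output)
    return autonomous, review


def intervention_rate(outputs, min_confidence=0.9):
    """Share of items that had to be escalated to a person."""
    if not outputs:
        return 0.0
    _, review = route(outputs, min_confidence)
    return len(review) / len(outputs)
```

With a threshold at one extreme, every item is escalated and the workflow behaves like HITL. At the other extreme, nothing is escalated and it behaves like HOOTL. The interesting territory is in between, where the intervention rate is low and the trigger is trustworthy.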

The Disappointment of Copilots

Given the lack of productivity impact in most economic metrics, it’s quite likely that HITL is to blame. As Sequoia partner Julien Bek wrote recently, the fastest-growing companies in 2025 were copilots. They sold AI tools to existing professionals to make those professionals better at their jobs, but with the expectation that those professionals would own the work product and catch the mistakes. So long as the AI is making mistakes, and human judgment is adding something AI lacks, this copilot HITL approach makes sense.

The Promise of Autopilots

But what happens when the AI starts to perform better than human professionals in autopilot mode? According to Mr. Bek, the autopilots will be the true disrupters. These will be the companies that solve the customer problem in a fully automated way and do it faster and cheaper than any tech-enabled services firm did in the past.

While I agree that software companies masquerading as services firms are the future, I’d argue that full “autopilot” AI is less important than a high autonomy score. Autopilot implies that the AI solves a problem or provides a service in full autonomy mode, with no human input. This is a nice goal, but what really matters is that human input is low, not that it is zero. The lower the human input, the fewer people you need, the cheaper the service, and the more scalable the service. This is the disruption that matters.

Whether you aspire to be such a next-wave services firm, or you’re simply a business chasing better ways of doing things, the AI you seek is the same. If you can flip work tasks to fully autonomous AI, then you should, because it will be faster and cheaper than people, and what’s left over will be less boring for those people. If you can split tasks into autopilot and copilot chunks, then you should, provided that you’ve got reliable guardrails shaping the split. This last point about guardrails to steer issues for human attention is both critical and non-trivial.

Autopilots Are Not Easy

Julien Bek mentions legal AI company Harvey as an example of a copilot player now “moving quickly to autopilot”. It may be a smart move for Harvey to do this, but I doubt that it will be quick or easy. They’ve been playing the copilot game because it was the low-hanging fruit of legal AI, and the least disruptive. But to become an autopilot player you need to solve the problems of hallucination and consistency, which is much trickier than slapping a legal veneer on ChatGPT. You need the guardrails that consistently flag things for expert human attention, and that confidently assure you the autopilot work is in safe autonomous hands.

Do Autopilots Work for Legal?

Let me give an example of legal AI that can safely operate with high autonomy: contract portfolio analysis. If I want to know all the good and bad things that might be lurking inside my portfolio of 100,000 contracts, I have two options. I hire an army of experts to read them. Or I deploy AI analytics to extract structured data automatically from all the legalese. Obviously, the second option is better, but only if I can trust the results. And this is the problem for the likes of Harvey. They may extract correct data much or even most of the time, but with their heavy LLM reliance, they frequently skip things, they frequently make things up, and it’s very hard to tell which stuff is good and which is bad. This is not something you can run on autopilot. Autopilots only work if you trust them.

The solution is to build the extra layers of AI that overcome the weaknesses of LLMs. At Catylex, for example, we’ve built proprietary, deterministic, analytical AI layers that extract data from contracts with zero chance of hallucination. When we combine this with LLMs we can run 100,000 contracts through our pipeline and tell you specifically which results can be trusted (the autopilot chunk), and which results might be a little flaky (the copilot chunk). It’s not full autopilot, but it doesn’t need to be. The speed and cost benefits are proportional to the chunk of work that is on autopilot, and that is a very large chunk.

Human Factors Matter

The other thing to think about with autopilot AI is whether there are human factors that make a task better suited to a human touch. There’s not much need for human touch when extracting data from a giant pile of contracts. But if you’re negotiating a contract with a new customer, there’s an important relationship dimension that will benefit from human nuance. You do not want an LLM negotiation tool shooting off a draft contract drenched in a bloodbath of aggressive redlines. Without a human copilot to monitor the work, redlining tools risk sending all the wrong signals to a customer about how easy you are to work with.

Give Your AI Projects an Autonomy Score

What does all this mean in practical terms? When you’re looking to solve a business problem with AI, make sure you really understand where any solution sits on the autonomy scale. A simple approach is to score the solution on a scale of 0-100, where the number indicates the percentage of work that can be achieved in a fully autonomous way. A score below 25 would imply low autonomy, and high human involvement. A score above 75 would imply high autonomy, and low human intervention rates. Anything in between is medium autonomy, and moderate human intervention. This scoring should be done based on actual testing and benchmarks, rather than optimistic numbers plucked out of thin air.
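As a rough illustration of the scoring described above, the following Python sketch computes an autonomy score from test results and maps it to the low, medium, and high bands. The `TaskResult` record and the function names are hypothetical, and the sketch assumes each tested task can be labeled simply as either needing human intervention or not.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    """Outcome of one tested task (hypothetical record for illustration)."""
    task_id: str
    needed_human: bool  # True if a person had to step in or fix the output


def autonomy_score(results):
    """Percentage of tested tasks completed with no human intervention."""
    if not results:
        raise ValueError("score requires at least one tested task")
    autonomous = sum(1 for r in results if not r.needed_human)
    return 100.0 * autonomous / len(results)


def autonomy_band(score):
    """Map a 0-100 score to the bands used in this article."""
    if score < 25:
        return "low"
    if score > 75:
        return "high"
    return "medium"
```

The score is only as good as the test set behind it, so the results fed into `autonomy_score` should come from a representative sample of real work rather than a handful of easy cases.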

Armed with this information, you will know that certain tasks can be done quickly and reliably and will deliver something of immediate value. If the expected value of what you get from autopilot mode is much higher than the project costs, the ROI will be clear and compelling. By contrast, anything with a low autonomy score will likely be slower, more costly, and at greater risk of a disappointing ROI.

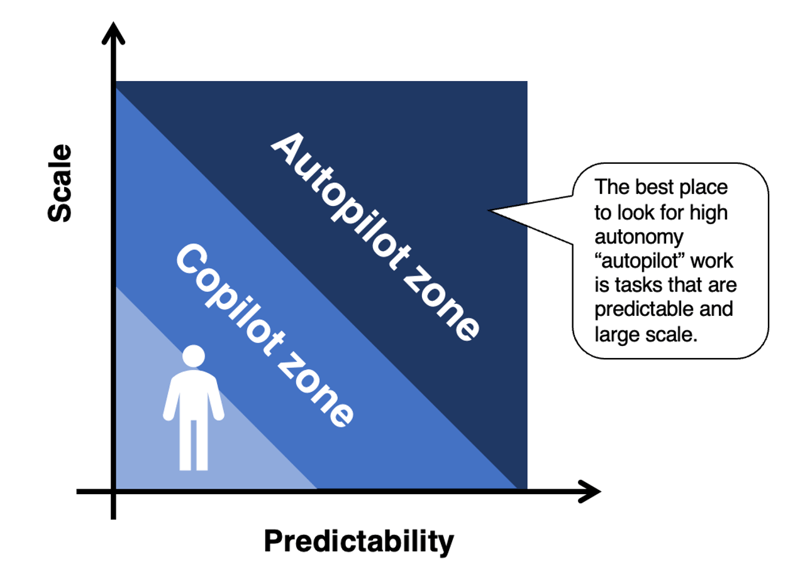

Scale Matters

What sort of work lends itself to autopilot mode? Julien Bek talks about work with low levels of human judgment. Two other factors are scalability and predictability. If tasks are highly predictable, they can be automated with less risk of messy scenarios that require human input to resolve. And if tasks occur at scale, the value of automation is much higher, both in terms of speed and cost savings.

Of course, it never hurts to remember that automation is not an end in itself, but rather a way to solve business problems better than before. The best problem to solve is not the one that proves some point about what’s possible with AI. The best problem to solve is the one that is (or soon will be) causing the most pain to your business.